Today is Tuesday, March 3, 2026. While Large Language Models (LLMs) compete for supremacy in logical reasoning, Google Labs has opened a front on the periphery: hyper-local language personalization. Its Slang Hang experiment is not just an educational tool; it demonstrates how “Cultural Context” is becoming an essential software primitive for AI infrastructure.

1. The Architecture: From “Textbook AI” to Native Conversation

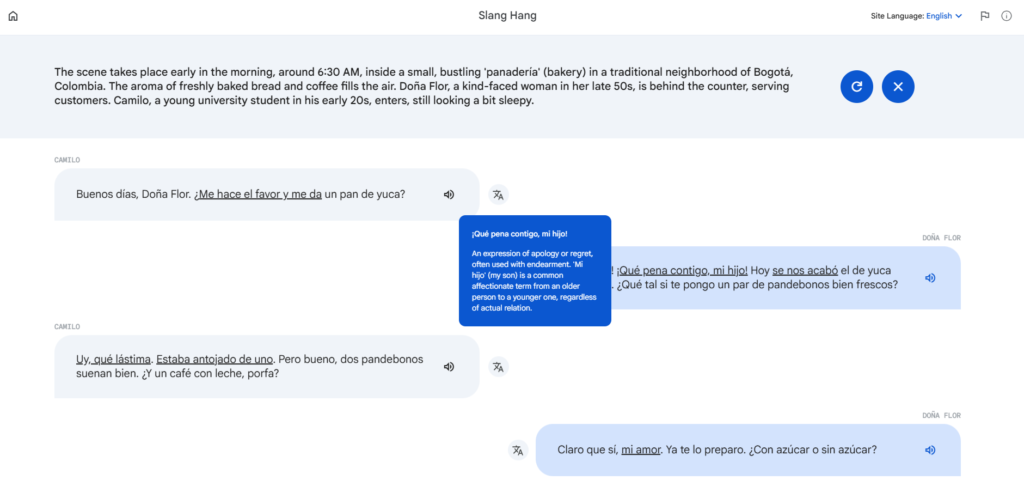

Historically, translation AI has failed at the “last mile”: informal language and regional idioms. Slang Hang (part of the Little Language Lessons suite) uses Gemini models to generate dynamic dialogues between fictional native speakers, countering the rigidity of traditional systems.

- Technical Differentiator: Unlike static translation methods, Slang Hang conditions generation on dialect. Users select not just the language but the specific region (for example, the Spanish of Medellín vs. Buenos Aires), and the lexicon and syntax of the output adjust accordingly.

- Headless Interaction: The engine generates scenarios, from coworkers on the subway to friends at a pet fair, and lets the user inspect highlighted terms for their literal meaning and, most importantly, their social context of use.
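The two points above can be sketched in a few lines. This is a minimal illustration, not Slang Hang's actual implementation: the prompt wording, the JSON shape, and the names `build_prompt`, `parse_slang`, and `SlangTerm` are all assumptions made for the example, and the model response is a hand-written sample rather than real Gemini output.

```python
import json
from dataclasses import dataclass


@dataclass
class SlangTerm:
    """One highlighted term (hypothetical schema, not Google's)."""
    term: str
    literal_meaning: str
    social_context: str


def build_prompt(language: str, region: str, scenario: str) -> str:
    """Assemble a region-conditioned instruction (assumed wording)."""
    return (
        f"Write a short, informal dialogue in {language} as spoken in {region}. "
        f"Scenario: {scenario}. "
        "Return JSON with a 'dialogue' list and a 'slang' list whose items "
        "have the keys term, literal_meaning and social_context."
    )


def parse_slang(raw_json: str) -> list[SlangTerm]:
    """Extract the highlighted terms from a JSON response string."""
    payload = json.loads(raw_json)
    return [
        SlangTerm(
            term=item["term"],
            literal_meaning=item["literal_meaning"],
            social_context=item["social_context"],
        )
        for item in payload.get("slang", [])
    ]


# Hand-written stand-in for a model response, used only for illustration.
SAMPLE_RESPONSE = """
{
  "dialogue": [{"speaker": "A", "text": "¿Qué más, parce?"}],
  "slang": [
    {
      "term": "parce",
      "literal_meaning": "short for 'parcero'",
      "social_context": "informal; used between friends"
    }
  ]
}
"""
```

Keeping the region as an explicit parameter, rather than burying it in a fixed prompt, is what makes the same pipeline reusable for Medellín and Buenos Aires alike.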

The accompanying image shows an example of the Slang Hang experiment configured for Colombian Spanish.

2. Google’s Moat: Gemini and Background Multilingualism

The viability of Slang Hang lies in Gemini’s ability to process subtle cultural signals. Google uses these experiments to test the efficiency of its APIs in low-latency, high-fidelity environments:

- Slang Generation: Style personalization layers mitigate the robotic “stiffness” that plagues base models.

- Object Detection (Word Cam): Integration with computer vision to surface vocabulary in real time, based on the user’s physical environment.

- Accent-Specific Text-to-Speech (TTS): The ability to replicate regional intonations, an area where the industry standard still struggles to offer a fluid and non-caricatured experience.
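As a rough illustration of the Word Cam idea, the sketch below turns object-detection output into vocabulary cards. It assumes a detector that returns labels with confidence scores; the `Detection` type, the `vocabulary_for_scene` function, the 0.6 threshold, and the tiny `VOCAB_ES` dictionary are all hypothetical and stand in for whatever vision model and lexicon a real system would use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """One detected object (hypothetical detector output)."""
    label: str
    confidence: float


# Tiny illustrative English-to-Spanish lexicon, not a real dataset.
VOCAB_ES = {"cup": "taza", "book": "libro", "chair": "silla"}


def vocabulary_for_scene(
    detections: list[Detection],
    vocab: dict[str, str],
    min_confidence: float = 0.6,
) -> list[tuple[str, str]]:
    """Return (label, translation) pairs for confident, unique detections.

    Detections are visited from most to least confident; low-confidence
    hits, repeated labels, and labels missing from the lexicon are skipped.
    """
    seen: set[str] = set()
    cards: list[tuple[str, str]] = []
    for det in sorted(detections, key=lambda d: d.confidence, reverse=True):
        if det.confidence < min_confidence or det.label in seen:
            continue
        seen.add(det.label)
        if det.label in vocab:
            cards.append((det.label, vocab[det.label]))
    return cards
```

Filtering on confidence before showing a word is a deliberate choice here: a wrong label teaches the wrong vocabulary, so it seems better to show fewer cards than uncertain ones.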

3. Failure Modes: Hallucination as a Feature

Even at this point in 2026, Google classifies these experiments as Alpha. For the developer, there are inherent risks:

- Slang Hallucination: The model may invent non-existent slang or misinterpret idioms, and either error can produce output that is offensive in specific contexts.

- Zero-Trust Learning: AI-assisted learning still requires a layer of human validation. Slang Hang does not replace the tutor; it acts as an accelerator for controlled cultural exposure.
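One way to picture that human validation layer is a gate that only trusts terms a reviewer has already approved. The sketch below is an assumption about how a developer might build this, not something Slang Hang is documented to do; `gate_slang`, `ReviewResult`, and the example lexicon are hypothetical names and data.

```python
from dataclasses import dataclass


@dataclass
class ReviewResult:
    """Outcome of gating model output against reviewed data."""
    approved: list[str]
    needs_review: list[str]


def gate_slang(
    generated_terms: list[str],
    reviewed_lexicon: set[str],
    blocked_terms: frozenset[str] = frozenset(),
) -> ReviewResult:
    """Split generated slang into approved terms and terms needing review.

    Matching is case-insensitive and ignores surrounding whitespace.
    Terms on the blocked list are dropped and never shown to the learner.
    """
    approved: list[str] = []
    needs_review: list[str] = []
    for term in generated_terms:
        key = term.strip().lower()
        if key in blocked_terms:
            continue  # known-offensive or known-wrong: discard outright
        if key in reviewed_lexicon:
            approved.append(term)
        else:
            needs_review.append(term)  # possibly invented by the model
    return ReviewResult(approved=approved, needs_review=needs_review)
```

Anything that lands in `needs_review` would go to a native speaker or tutor before reaching the learner, which keeps the model in the role of accelerator rather than authority.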

⚡ Infrastructure Signals

- Personalization as a Service (PaaS): Language learning is evolving from a “one-app-for-all” model to an injectable context engine for any interface.

- Edge Deployment: Optimization for mobile suggests that the ultimate destination for these tools is the Always-On Assistant (persistent assistants in wearable devices).

💡 The dontfail! Verdict

Slang Hang confirms that software is devouring culture. We are no longer building applications for the user to learn a system; we are building systems that learn the user’s context. For the systems architect, the lesson is clear: real value does not reside in generic translation, but in mastering regional nuance.

🔗 Sources & References

- Google Developers Blog: Little Language Lessons Technical Deep Dive

- Google Labs Experiments: Slang Hang Official Lab

- Market Analysis: TechCrunch: AI Language Wingman

© 2026 dontfail.is. Analysis: AI Education | Synthesis: Google Labs | Layer: dontfail!